HPE SimpliVity OAC card failure

10/27/2022

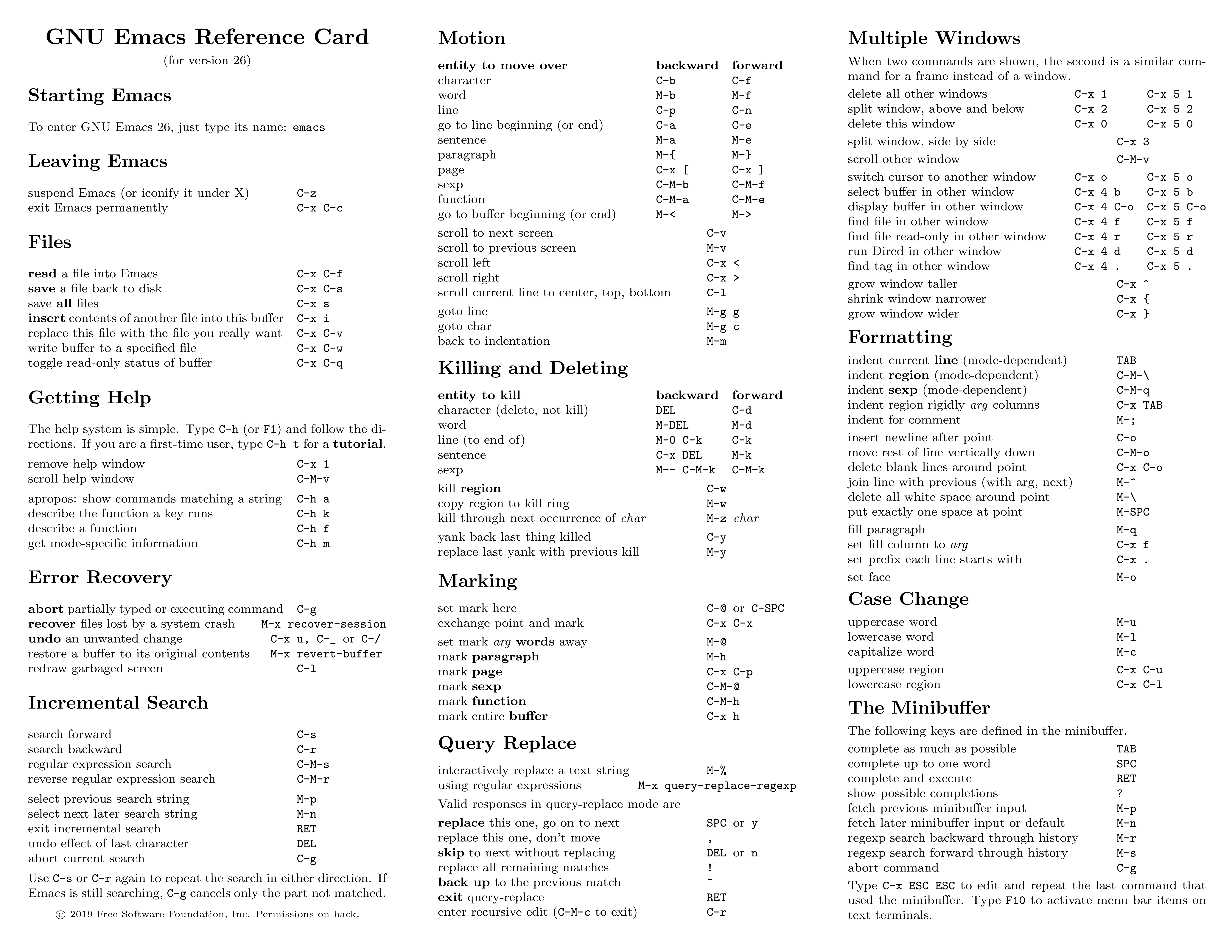

As discussed in Part 1, we have proven HPE have made false claims about Nutanix snapshot capabilities as part of the #HPEDare2Compare Twitter campaign. In Part 2, I explained how HPE/SimpliVity's 10:1 data reduction HyperGuarantee is nothing more than smoke and mirrors and that most vendors can provide the same if not greater efficiencies, even without hardware acceleration. In Part 3, I corrected HPE on their false claim that Nutanix cannot support dedupe without 8 vCPUs, and in Part 4, I will respond to the claim that Nutanix has less resiliency than the HPE SimpliVity 380.

To start with, the biggest causes of data loss, downtime and outages in my experience are human error. This ranges from poor design, improper use of a product and poor implementation/validation to a lack of operational procedures or the discipline to follow them. The number of times I've seen properly designed solutions have issues I can count on one hand. Those rare situations have come down to multiple concurrent failures at different levels of the solution (e.g. infrastructure, application, OS), not just things like one or more drive or server failures.

Nonetheless, HPE SimpliVity are commonly targeting Resiliency Factor 2 (RF2) and claiming it is not resilient, because they lack a basic understanding of the Acropolis Distributed Storage Fabric: how it distributes data, how it rebuilds from failures and therefore how resilient it is. RF2 is often mistakenly compared to RAID 5, where a single drive failure takes a long time to rebuild and subsequent failures during rebuilds are not uncommon, which would lead to a data loss scenario (for RAID 5).

Let's talk about some failure scenarios comparing HPE SimpliVity to Nutanix. Note: The information below is accurate to the best of my knowledge, testing and experience with both products.

When is a write acknowledged to the Virtual Machine

HPE SimpliVity: They use what they refer to as an OmniStack Accelerator Card (OAC), which uses “Super capacitors to provide power to the NVRAM upon a power loss”. When a write hits the OAC, it is acknowledged to the VM. It is assumed, or even likely, that the capacitors will provide sufficient power to commit the writes persistently to flash, but the fact is that writes are acknowledged BEFORE they are committed to persistent media. HPE will surely argue the OAC is persistent, but until the data is on something such as a SATA-SSD drive I do not consider it persistent, and I invite you to ask your trusted advisor/s their opinion because this is a grey area at best. This can be confirmed in the SimpliVity Hyperconverged Infrastructure Technology Overview.

Nutanix: Writes are only acknowledged to the Virtual Machine when the write IO has been checksummed and confirmed written to persistent media (e.g. SATA-SSD) on the number of nodes/drives required by the configured Resiliency Factor (RF). Writes are never written to RAM or any other non-persistent media, and at any stage you can pull the power from a Nutanix node/block/cluster and 100% of the data will be in a consistent state.
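The difference between the two acknowledgement policies described in this post can be illustrated with a small toy model. This is only a sketch, not either vendor's implementation: the `Node` class, its `buffer` and `persistent` lists, and all function names are hypothetical, and the model deliberately assumes the worst case in which a power loss discards whatever is still in the buffer (the post itself notes that the capacitors will likely allow the flush to complete).

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """Toy storage node (hypothetical): a buffer plus persistent media."""
    name: str
    buffer: list = field(default_factory=list)      # assumed lost on power failure
    persistent: list = field(default_factory=list)  # survives power failure

    def power_loss(self) -> None:
        # Worst-case assumption: anything not yet on persistent media is gone.
        self.buffer.clear()


def write_ack_after_persist(nodes, data, rf):
    """Acknowledge only after `rf` nodes hold the write on persistent media."""
    if len(nodes) < rf:
        raise ValueError("not enough nodes for the requested resiliency factor")
    for node in nodes[:rf]:
        node.persistent.append(data)
    return True  # the acknowledgement to the VM


def write_ack_on_buffer(node, data):
    """Acknowledge as soon as the write lands in the buffer; flush comes later."""
    node.buffer.append(data)
    return True  # acknowledged before the data is on persistent media


def flush(node):
    """Move buffered writes to persistent media."""
    node.persistent.extend(node.buffer)
    node.buffer.clear()


if __name__ == "__main__":
    cluster = [Node("a"), Node("b"), Node("c")]
    write_ack_after_persist(cluster, "w1", rf=2)
    for n in cluster:
        n.power_loss()
    copies = sum("w1" in n.persistent for n in cluster)
    print("ack-after-persist copies surviving:", copies)

    single = Node("x")
    write_ack_on_buffer(single, "w2")
    single.power_loss()  # power lost before flush() ran
    print("ack-on-buffer write survived:", "w2" in single.persistent)
```

In this model the only question is what has been guaranteed at the moment the VM receives the acknowledgement: with acknowledge-after-persist the data is already on `rf` nodes, while with acknowledge-on-buffer its survival depends on the flush completing afterwards.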
